Is the "AI Boom" already over? Tech Valuations Back to Pre-AI Boom Levels

Last edited Sun Apr 12, 2026, 11:29 PM

Check this out.

https://www.apollo.com/wealth/the-daily-spark/tech-valuations-back-to-pre-ai-boom-levels

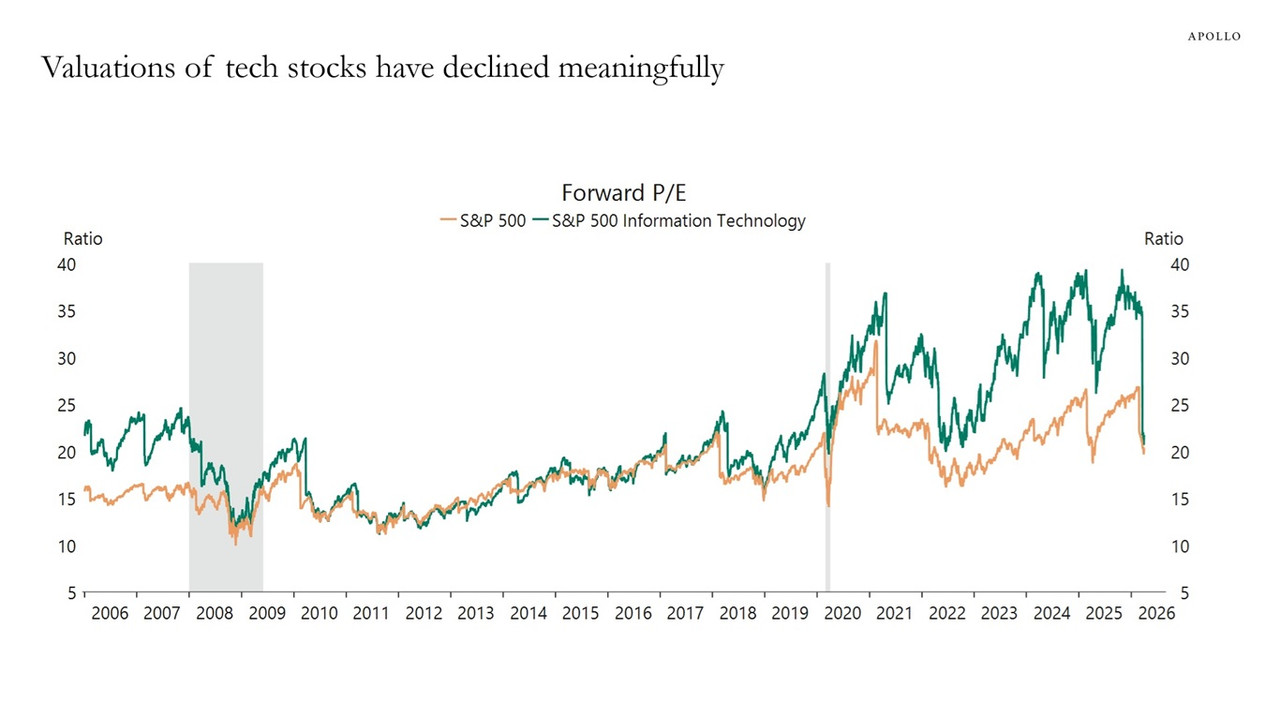

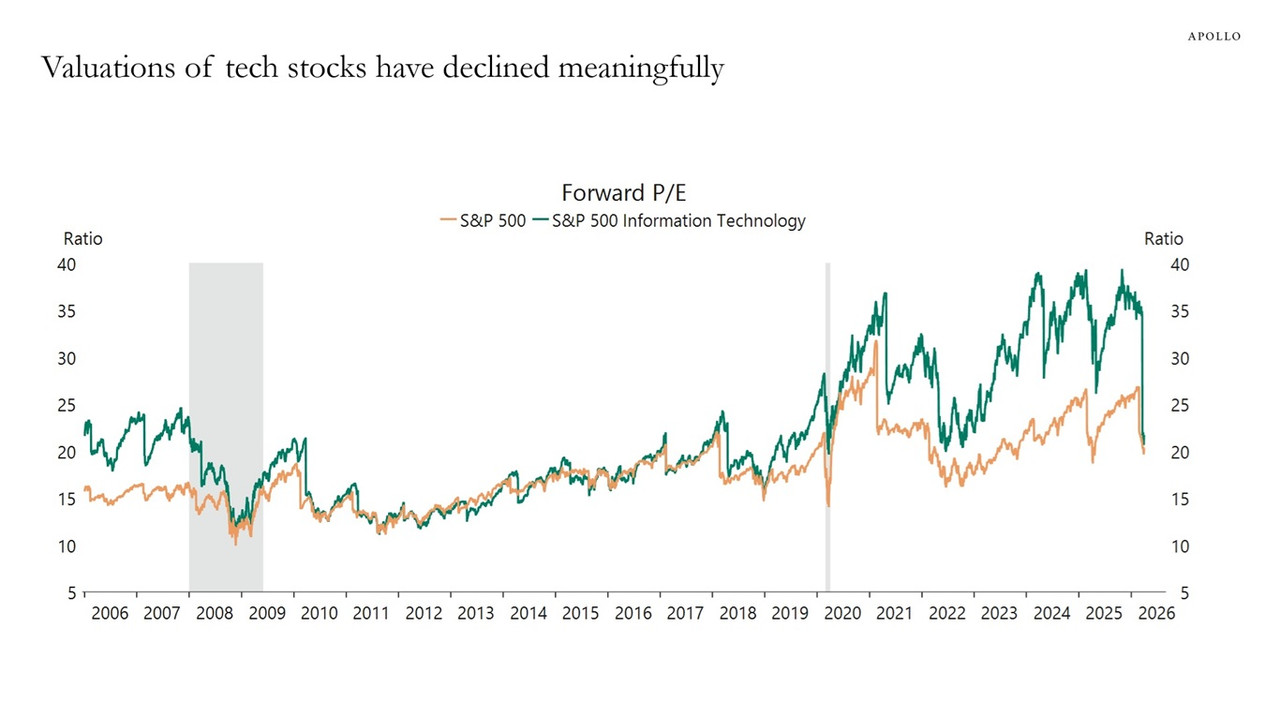

The chart below compares the forward P/E ratios for the S&P 500 and the S&P 500 Information Technology sector.

Tech valuations have compressed from 40x to 20x, and we are back at levels last seen before the AI boom began.

That is all!

Higher resolution PDF (136Kb) of that chart:

https://www.apollo.com/content/dam/apolloaem/pdf/daily-spark/2026/apr/11/dailyspark-2026-04-11-1775830796.pdf
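For anyone who wants to see what "compressed from 40x to 20x" means arithmetically, here is a rough sketch. The prices and earnings figures are made up for illustration (they are not from the Apollo chart), and the helper names are my own. The point is only that a forward P/E can halve either because prices fall or because expected earnings rise.

```python
def forward_pe(price: float, expected_eps: float) -> float:
    """Forward P/E: current price divided by expected earnings per share."""
    if expected_eps <= 0:
        raise ValueError("forward P/E is not meaningful for non-positive earnings")
    return price / expected_eps


def compression(old_multiple: float, new_multiple: float) -> float:
    """Fractional drop in the multiple (0.5 means the multiple halved)."""
    return 1 - new_multiple / old_multiple


# Hypothetical numbers, chosen only to reproduce a 40x -> 20x move.
start = forward_pe(price=400.0, expected_eps=10.0)

# Path 1: price halves while expected earnings stay flat.
via_price = forward_pe(price=200.0, expected_eps=10.0)

# Path 2: price stays flat while expected earnings double.
via_earnings = forward_pe(price=400.0, expected_eps=20.0)

print(f"start: {start:.0f}x")
print(f"after price drop: {via_price:.0f}x")
print(f"after earnings growth: {via_earnings:.0f}x")
print(f"compression: {compression(start, via_price):.0%}")
```

Both paths end at the same multiple, so the chart by itself doesn't say whether the move came from falling prices, rising earnings estimates, or a mix of the two.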

Edit to add: I see a lot of projects on GitHub that essentially run LLMs on home hardware (especially Apple Silicon). I suspect that the "downsizing" of simpler tasks, along with the more efficient innovations coming out of China, will drive some of the mania down. Just how much? Will there be a "winner take all" in the enterprise space? Are there too many competitors all trying to create vertical moats? If so, what's their real value outside of their core business? Who knows?

Companies like Microsoft and Google are savaging their core businesses in order to become THE AI COMPANY (and yikes, nothing else!).

SECOND EDIT:

PocketLLM

https://github.com/vraj00222/pocketllm

Your AI lives on a USB stick. Plug in. Chat locally. Unplug. Zero footprint.

No install. No cloud. No SSD space wasted. One command: ./launch.sh

PocketLLM is a portable USB toolkit for running local LLMs. It bundles everything — the Ollama runtime, model weights, a chat UI, and conversation history — on a single USB drive. Plug it into any Mac or Linux machine, run one command, and you have a fully working local AI. No install required on the host. On unplug, nothing remains.

Inference speed from USB = SSD. After the one-time model load, we benchmarked 54 tokens/sec on both. See benchmarks.

For non-enterprise tasks, AI will run locally in the near future.

That leaves all the monster AI companies competing for a handful of enterprise customers (the current US administration doesn't believe in competition, only grift).